AI chatbots are useful. But they are trained to please when all you want is information — and that makes all the difference.

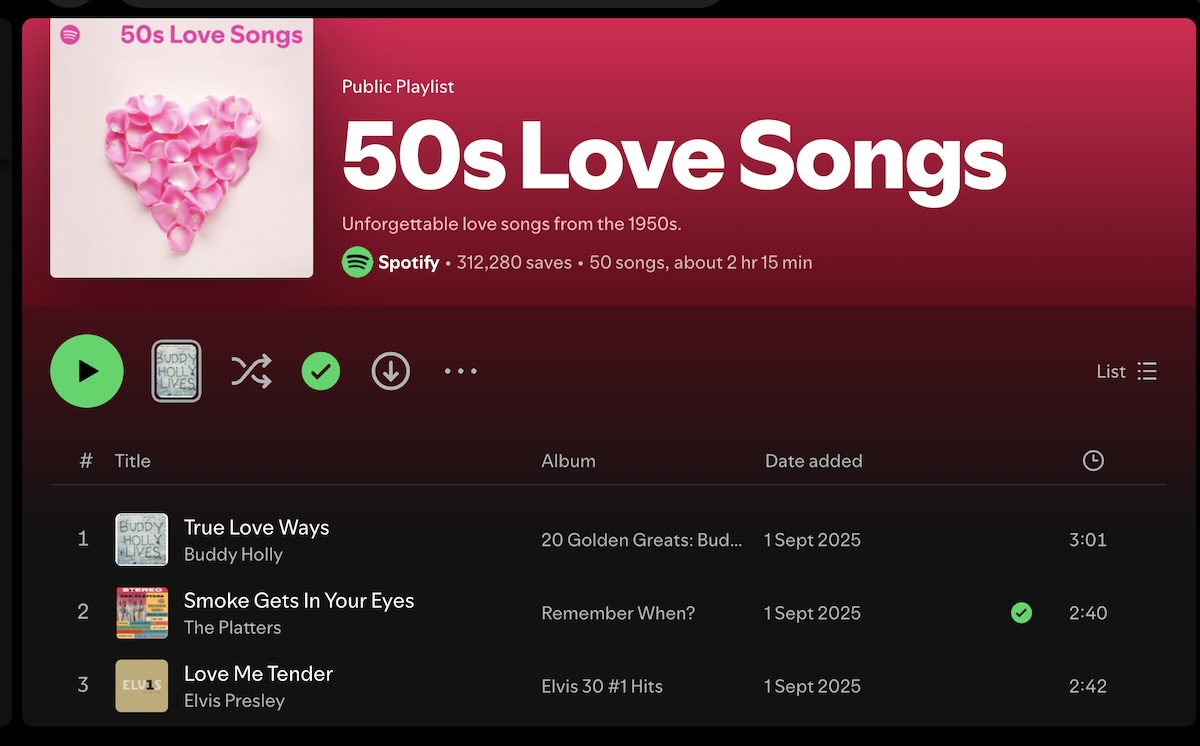

I appreciate the convenience of having questions answered by Google itself, rather than being directed to other websites. Sometimes I enjoy diving into AI Mode, where Google answers one question after another in a kind of open-ended conversation. But can Google’s AI Mode, or the various AI chatbots, replace publications like The New York Times or The Economist, known for their rigorous fact-checking and well-informed views? No, they can’t — for the simple reason that far from being sources of objective information, they are as subjective as a Spotify playlist.

I like playlists. The Beatles can set me rocking and Elvis Presley’s inimitable deep voice can make me swoon; I love the harmonies of the Everly Brothers and the Beach Boys. But I seek information from chatbots, not entertainment — and, as an old newspaper reader, I want information that has been verified. Fact-checking is something newspapers are expected to provide. It does not feature in the training of chatbots.

I checked with Claude, Gemini and Perplexity, and this is how they described the way large language models are trained. First, they are trained on enormous amounts of data to predict the next word, learning grammar, usage, facts and arithmetic along the way. Then they are trained to follow instructions and be more helpful.

Trained to please

While the initial training is self-supervised, with the models learning largely on their own from raw text, human trainers later coach them in a process called Reinforcement Learning from Human Feedback. Trainers rate the models’ responses, and the models learn from those ratings. Responses that feel helpful, agreeable and confident tend to score well. Responses that push back, introduce uncertainty, or say “actually, that premise is wrong” can be less satisfying, even when they are more accurate. The model learns, in effect, that agreement is a form of helpfulness. But it is bad for users if a chatbot gives them only what it thinks they want to hear instead of what is correct. Researchers call the result “sycophancy”.
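For readers who like to see the incentive in numbers, here is a toy sketch in Python. It is not how any real chatbot is trained: the four ratings are invented for illustration, and the names `ratings` and `average_score` are hypothetical. It only shows how, if raters tend to score agreeable answers higher, a system chasing the score would be nudged towards agreement even at the cost of accuracy.

```python
from statistics import mean

# Invented rater scores on a 1-5 scale; these are assumptions, not real data.
ratings = [
    {"agrees_with_user": True,  "accurate": True,  "score": 5},
    {"agrees_with_user": True,  "accurate": False, "score": 4},
    {"agrees_with_user": False, "accurate": True,  "score": 3},
    {"agrees_with_user": False, "accurate": False, "score": 1},
]


def average_score(rows, key, value):
    """Average rater score over the rows where rows[key] equals value."""
    return mean(r["score"] for r in rows if r[key] is value)


# How much does each trait raise the average score in this toy data?
agree_gain = (average_score(ratings, "agrees_with_user", True)
              - average_score(ratings, "agrees_with_user", False))
accuracy_gain = (average_score(ratings, "accurate", True)
                 - average_score(ratings, "accurate", False))

# The response a pure score-chaser would pick when user and facts disagree:
# agreeable but wrong, or accurate but contradicting the user.
candidates = [r for r in ratings if r["agrees_with_user"] != r["accurate"]]
score_chaser_pick = max(candidates, key=lambda r: r["score"])

print("Gain from agreeing:", agree_gain)
print("Gain from being accurate:", accuracy_gain)
print("Score-chaser picks an accurate answer:", score_chaser_pick["accurate"])
```

In this made-up data, agreeing with the user is worth more than being right, so the agreeable but wrong answer wins. Real training is far more elaborate, but the direction of the pull is the same one researchers describe.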

What this produces, in practice, is a system that behaves rather like an extremely well-read acquaintance who has learned, through years of social experience, never to make anyone feel bad about what they already believe.

Social media algorithms created echo chambers by controlling what we see. AI creates something subtler: an echo chamber that can speak. When a chatbot matches your vocabulary, mirrors your thoughts and builds on your assumptions instead of questioning them, it is merely corroborating you. It is not taking you forward. To grow, you have to be challenged.

That friction matters. The moment when someone better informed than you gently contradicts your premise is, more often than not, the moment that sharpens your intellect. Good teachers do it. Good editors do it. Good friends, on good days, do it. A chatbot designed to maximise user satisfaction, on the other hand, is likely to skip that step.

No penalty for errors

It is worth being precise about what AI is not, because the confusion causes real harm. AI is not a journalist and it is not a researcher, and the differences are not cosmetic. A journalist must name sources, verify claims, submit to editors and fact-checkers, and accept correction, publicly, when wrong. The entire professional architecture rests on getting things right. A journalist who consistently gets things wrong will be sacked. AI is under no compulsion to get things right; it can carry on even if wrong.

None of this is an argument against using AI. That would be like arguing against a good encyclopaedia on the grounds that it lacks the depth of a scholarly monograph. Each tool has its proper use. AI is genuinely remarkable at synthesising large amounts of information quickly, explaining complex concepts in plain language, drafting and editing text, translating, and providing the kind of patient tutoring that is not always available to those who need it. For anyone without ready access to specialists — lawyers, doctors, financial advisers — a thoughtful AI response is often a great deal better than nothing.

However, AI cannot always be trusted to get things right.

The age of AI has increased, not decreased, the need to double-check facts. Once, the burden of verification rested with publishers: editors, fact-checkers, peer reviewers, legal teams. The reader was largely a passive recipient of information that had already been filtered. Now that filter is thinner, and the burden has shifted to the individual asking the question.

To use AI well, one must treat it a little like a very confident but occasionally unreliable narrator — fascinating, often illuminating, but not to be taken entirely on its own authority. Claims that matter should be checked. Citations should be verified.

Remember the Evil Queen in Snow White? Her magic mirror assured her, year after year, that she was the fairest in the land — until the day it transferred that honour to her stepdaughter. Enraged, the Queen set out to destroy Snow White, and paid dearly for her recklessness.

Her mistake was not owning a magic mirror. It was that she stopped looking at anything else. She let the mirror become her only window onto the world until it finally told her something she could not bear to hear. It was that dependence, more than any single piece of bad news, that destroyed her in the end.

We would do well to keep a few other windows open.